Helium’s news bias analysis is an easy, empirical measure of a news source’s bias. It is powered by AI, with no human input, and identifies certain types of language across a large number of articles. Biases in red are typically more toxic than those in orange.

How It Works

Using zero-shot learning, Helium probabilistically classifies news according to the following categories:

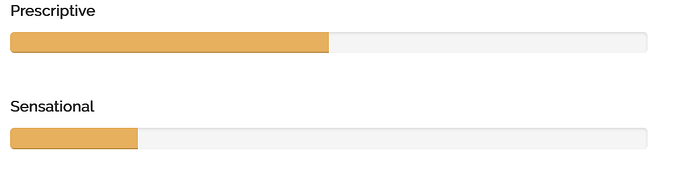

Sensational

Do articles use emotionally charged language (trying to appeal to primitive emotions) instead of objective, descriptive reporting?

Prescriptive

Do articles use prescriptive language (trying to disguise opinions as facts) as opposed to descriptive, epistemically humble language?

Begging the Question

Do articles beg the question (selectively push a certain answer/narrative) as opposed to neutral, truth-seeking journalism?

Appeal to Authority

Do articles appeal to authority/institutions as opposed to reason, facts, logic, and primary sources?

Political

How often do sources publish about political topics? While articles about political topics aren’t biased per se, a higher proportion could indicate more politicization instead of factual reporting.

Subjective

Do articles use subjective, relativistic language as opposed to objective, balanced, fact-based language that acknowledges uncertainty/perspective?

Opinionated

Do articles use opinionated language, as opposed to informative/objective reporting?

Fearful

Do articles use fearful and potentially manipulative language, as opposed to neutral/other emotions?

Oversimplification

Do articles oversimplify nuance into reductionist categories as opposed to factual, context-specific language?

Gossip

Do articles gossip about people as opposed to reporting impersonal/objective information?

Immature

Do articles use immature language as opposed to mature/truth-seeking reporting?

Covering the Response

Do articles report on events themselves, or on how other people react/respond to events?

Victimization

Do articles frame people as victims as opposed to discussing events/responsibilities?

Ideological

Do articles use canned/ideological/rigid arguments instead of free, objective thinking?

Circular Reasoning

Do articles use circular reasoning as opposed to logical/deductive/inductive/first-principles reasoning?

Spam

Do articles use spammy/sales/advertising language designed to persuade instead of inform?

Bullish/Bearish

Do articles use loaded language that implies an asset will go up/down?

Double Standard

Do articles use different standards for things that warrant the same standard?

Negative vs Positive Sentiment

Do articles cover a topic in a positive, negative, or neutral/non-emotional light?

Rational vs Irrational

Do articles use rational, logical, empirical thinking or irrational and abstract language?
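
To make the idea of probabilistic classification "over a large number of articles" concrete, here is a minimal sketch of how per-article category probabilities could be rolled up into a per-source profile. This is an illustration only, not Helium's actual method: the scores, the three-category subset, the `source_profile` function, and the 0.5 threshold are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical per-article probabilities, such as a zero-shot classifier
# might emit. The numbers and the category subset are made up.
article_scores = [
    {"sensational": 0.82, "opinionated": 0.40, "political": 0.91},
    {"sensational": 0.15, "opinionated": 0.22, "political": 0.05},
    {"sensational": 0.61, "opinionated": 0.75, "political": 0.88},
    {"sensational": 0.30, "opinionated": 0.35, "political": 0.70},
]


def source_profile(scores, threshold=0.5):
    """Summarize one source: per category, the mean probability and the
    share of articles whose probability exceeds the (assumed) threshold."""
    profile = {}
    for category in scores[0]:
        values = [article[category] for article in scores]
        profile[category] = {
            "mean": round(mean(values), 3),
            "share_flagged": sum(v > threshold for v in values) / len(values),
        }
    return profile


profile = source_profile(article_scores)
for category, stats in profile.items():
    print(category, stats)
```

The mean captures how strongly a type of language shows up on average, while the flagged share answers "how often" questions such as the Political category. A real system would presumably need many more articles per source for either number to be stable.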